This chapter discusses important features for maintaining data integrity. The c‑tree Single User mode and FairCom Server both offer full on-line transaction processing and file mirroring. These features and more are discussed in this chapter.

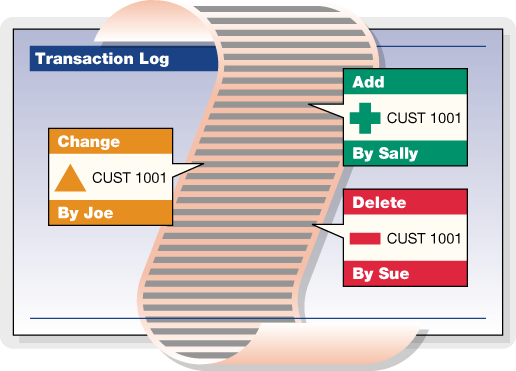

Transaction Processing Concepts

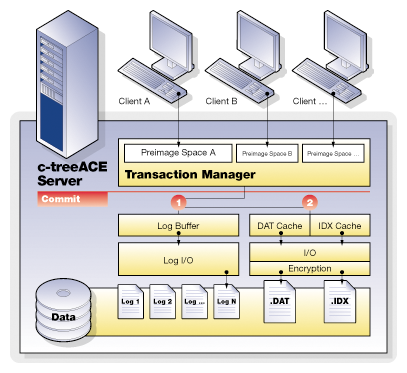

FairCom DB Transactional Technology

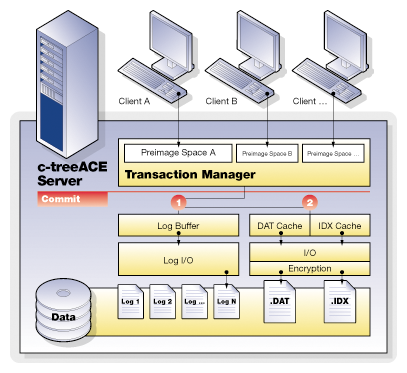

The Transactional Technology layer includes the low-level functions to control and maintain transaction integrity, guaranteeing complete recovery of data should an unexpected outage occur, such as hardware or power failures. With the FairCom DB transaction logging facility, many advanced features can be enabled such as dynamic dumps with complete roll forward capability and complete index data backup; replication to failover sites; and transaction history for auditing.

Transaction control provides two extraordinarily powerful tools for your applications - atomicity of operations, and recovery of data. In fact, there are four key components of any complete transactional system, collectively referred to as ACID:

Atomicity - Grouping of database update operations into all-or-nothing commits;

Consistency - The database remains in a valid state due to any transaction;

Isolation - Concurrent transactions do not interfere with each other outside of well-defined behaviors;

Durability - Committed transactions are guaranteed to be recoverable, even if the data and index files are inconsistent.

FairCom DB is fully ACID compliant and as such, FairCom DB provides the highest levels of transaction integrity.

FairCom DB transaction control can also be enabled for many legacy applications allowing many additional advanced features to be available. Automatic transactions, while not providing complete atomic operations, do allow full recovery, and an ability to add replication for fault tolerant systems and dynamic dumps of index data.

Why Do We Need Transaction Processing?

Let’s examine an example, entering an invoice into an accounting system. When we create an invoice, several data files may be updated. The customer master file may have a field for each customer that keeps the current balance. This balance must be increased if we issue an invoice. There is an invoice file that has a record added to it. There may be a separate invoice detail file, keeping a record of each line on the invoice. In addition, each invoice detail will affect the balance of an inventory item. All of these files must be in sync - the customer balance must match the total of the open invoices, the detail lines must all be present, and the inventory balances must be accurate as affected by the invoices and other activities.

What happens if the computer crashes while these files are being updated? You could have some of the files already updated, but others not. For instance, the customer and invoice files could be updated, but the inventory records may not. Depending on the way the operating environment works, those records that already exist (the customer and inventory records) could be updated, but the records that are to be added to the file (the invoice and invoice details) may not be saved yet. In any case, you now have files that are not synchronous. Adjusting the files to bring them back into balance can be difficult, as you may not be sure which files have been updated and which have not. Often your only choice is to go to a backup copy of the files, where you know that all of the files are synchronous. Unfortunately, these backups could be days out of date (or more!), and it can take time to make them current.

It doesn’t have to be a disaster situation to cause problems, either. The file updating in our example requires a number of files and records, and the user has to have the proper file and record locks in order to be able to complete the processing. What if you find that you are part way through the updating process and you cannot get one of the records to update? For instance, if you have a large number of details in the invoice, you may find that part way through the process one of the inventory records is locked by another user. Do you just skip this item? If you have done a large number of updates already, for the other details in the invoice, how do you go back?

The answer to these problems is transaction processing with FairCom DB single-user mode or the FairCom Server.

Atomicity

Atomic operations allow an all-or-nothing approach to database updates. Grouping operations together provides for both business unit cohesiveness, as well as performance. Multiple files take part within a single transaction. Should any operation within the transaction fail, this entire sequence can be immediately aborted. Compare this to maintaining individual file updates under your own control.

To enhance the all-or-nothing atomic operation, complete savepoint and rollback features allow you to quickly move within a transaction as conditions change. Errors can be handled and operations adjusted without completely starting over.

In addition, FairCom DB offers extremely fine grained locking mechanisms both within and between transactions. The ability to keep locks from before a transaction begins, and maintain locks after a transaction commits gives FairCom DB developers control unavailable in most other database technologies. This yields a key advantage to high performance and acutely tuned applications.

Journaling

FairCom's transaction management system maintains files that record various states of information necessary to recover from unexpected problems. Information concerning ongoing transactions is saved on a continual basis in these transaction log files. A chronological series of transaction logs is maintained during the operation of the application. Transaction logs containing the actual transaction information are saved as standard files. They are given names in sequential order, starting with L0000001.FCS (which can be thought of as active transaction log, number 0000001) and counting up sequentially (that is, the next log file is L0000002.FCS, etc.).

By default, the transaction management logic saves up to four active logs at a given time. When there are already four active log files and another is created, the lowest numbered active log is either deleted or saved as an inactive transaction log file, depending on how FairCom DB is configured.

Every new FairCom Server session begins with checking the most recent transaction logs (the most recent 4 logs, which are always saved as “active” transaction logs) to determine if any transactions need to be undone or redone. If so, these logs are used to perform automatic recovery.

The FairCom DB transaction logs serve as the ultimate control point for many important FairCom DB features:

- Automatic Recovery on startup

- Dynamic Backups

- Roll forward/backward capabilities

- Replication

- Change History

Automatic Recovery

Once you establish full transaction processing using the ctTRNLOG file mode, you can take advantage of the automatic recovery feature. Atomicity will generally prevent problems of files being partially updated. However, there are still situations where a system crash can cause data to be lost. Once you have signaled the end of a transaction, there still is a “window of vulnerability” while the application is actually committing the transaction updates to disk. In addition, for speed considerations some systems may buffer the data files and indexes, so that updates may not be flushed to disk immediately. If the system crashes, and one of these problems exist, the recovery logic detects it. If you set up the system for automatic file recovery, the recovery logic automatically resets the database back to the last, most complete, state that it can. If any transaction sets have not been completed, or “committed”, they will not effect on the database.

FairCom offers the most complete set of transaction processing functions and capabilities of any file management product on the market today. By using these functions properly, we can provide both atomicity and automatic recovery.

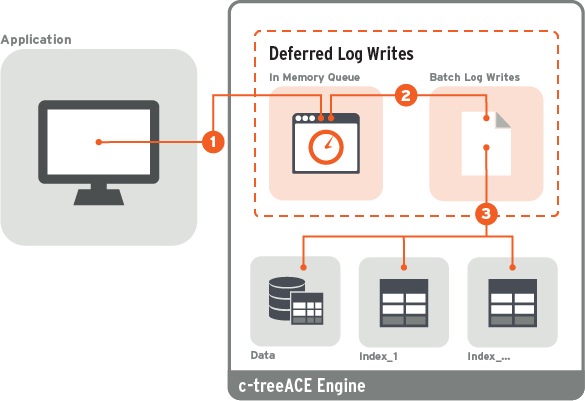

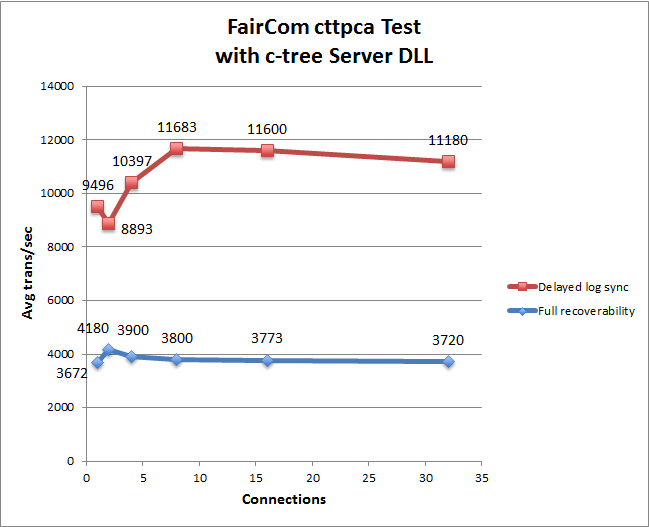

Transaction Grouping

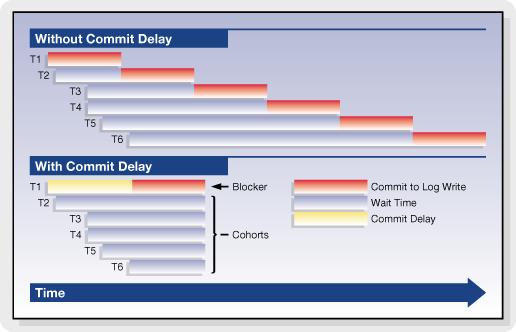

The FairCom Server supports grouping transaction commit operations for multiple clients into a single write to the transaction logs. Transaction grouping, or commit delay, is a high performance optimization for environments with large numbers of clients sustaining high transaction rates. Commit delay takes advantage of the overhead involved in flushing a transaction log. The performance improvement per individual thread may result in only milliseconds or even microseconds, however, multiplied hundreds of times over hundreds of threads and thousands of transactions per second, this becomes quite significant.

Effect of commit delay on transaction commit times for multiple threads:

FairCom has supported commit delay logic for many years and continues to optimize and enhance the effectiveness of the transaction grouping logic. For example, in recent FairCom DB editions, the commit delay is self-adjusting and numerous heuristics and statistics have been added to constantly monitor and report the effectiveness of the current commit delay state.

Basic Transaction Processing

Many of the benefits of transaction processing can be achieved very easily. This section will introduce you to the basics of establishing transaction processing. For many users, this will be all that is needed. Detailed explanations of the functions used here will be given later, including additional functions and other options.

Transaction File Modes

When you are creating your data files and indexes, you have three options in relation to transaction processing. These options are established by using the appropriate value in the file mode for the file that you are creating.

ctTRNLOG

If you use a file mode of ctTRNLOG, you will get the full benefit of transaction processing, including both atomicity and automatic recovery. If you are not sure of what mode to use, and you do want to use transaction processing, then use this mode.

ctPREIMG

The ctPREIMG file mode implements transaction processing for a file but does not support automatic file recovery. Files with ctPREIMG mode do not take any space in the system transaction logs.

ctLOGIDX

Transaction controlled indexes with this file mode are recovered more quickly than with the standard transaction processing file mode ctTRNLOG. This feature can significantly reduced recovery times for large indexes and should have minimal effect on the speed of index operations.

ctLOGIDX is only meaningful if the file mode also includes ctTRNLOG. Note that ctLOGIDX is intended for index files only! Do not use ctLOGIDX with data files.

No Transaction Processing

If you do not use either ctPREIMG or ctTRNLOG then the file will not be involved in transaction processing in any way. If you are using transaction processing then you should only do this with files that do not need to be kept as a part of your database. If you are NOT using transaction processing, consider reviewing the ctWRITETHRU file mode in Data and Index Files.

Please note that you should only use one or the other of ctPREIMG and ctTRNLOG.

Create Files

When you create a data file and index, you must specify if that file is to be involved with transaction processing. To get the full benefits, including atomicity and automatic recovery, every file that requires recoverability should have the file mode of ctTRNLOG. Just OR it in with the other file mode values.

Even a library that does not support transaction processing (e.g., standalone multi-user or single-user NO_TRANPROC) can create files that will be transaction ready when opened in a TRANPROC environment. The files automatically revert to NO_TRANPROC when returned to a NO_TRANPROC environment (in which case Begin, Commit, Abort behave as LockISAM calls). To create such a file in a NO_TRANPROC environment simply set the file mode to include either ctTRNLOG or ctPREIMG at create time.

There are two strategies to create a file in a TRANPROC environment that can be used in a NO_TRANPROC environment:

- Set the file mode to include either ctTRNLOG or ctPREIMG. After creating the file, but before closing it (which ensures the file is still opened exclusively), call UpdateHeader(filno, TRANMODE, ctXFL_ONhdr) or UpdateHeader(filno, PIMGMODE, ctXFL_ONhdr), respectively. Please be aware that you need to call UpdateHeader() for each physical file just created: data and indexes.

- Set the file mode to include either ctTRNLOG or ctPREIMG and including TRANMODE (if ctTRNLOG) or PIMGMODE (if ctPREIMG) in the x8mode member of the extended create block.

An index file that does not include either ctTRNLOG or ctPREIMG in its filemode CANNOT be changed via a call to UpdateHeader(). It must be rebuilt instead.

A call to UpdateFileMode() that changes between ctTRNLOG and ctPREIMG, or ctPREIMG and ctTRNLOG, will have the TRANMODE and PIMGMODE information updated automatically. However, a call to UpdateHeader() to set or turn on TRANMODE or PIMGMODE that is incompatible with the existing file mode or auto switch mode results in a TTYP_ERR (99).

For example, if TRANMODE is already turned on, then PIMGMODE cannot also be turned on. If the file mode is ctPREIMG, TRANMODE cannot be turned on. It is possible to turn off TRANMODE or PIMGMODE by using UpdateHeader() with ctXFLOFFhdr.

A superfile member cannot set TRANMODE or PIMGMODE unless the host has the same settings. UpdateHeader() returns SRCV_ERR (419) if the settings don’t match.

Files can also be created as “Transaction Dependent”, allowing the create to be aborted. See Transaction Dependent Creates and Deletes.

Begin Transactions - Begin()

You will need to decide on logical groups of file updates that can be delimited as transactions. Record locks are held on updated records for the duration of the transaction, so you don’t want to make the transaction group too large. On the other hand, you do not want to make the transaction group too small. You must contain all of the related updates in one transaction. Using our example from above, you don’t want to have the transaction group involve more than one invoice. You also don’t want it to involve less than a whole invoice.

To begin a transaction make the following call to the Begin() function:

Begin(ctTRNLOG | ctENABLE);The ctENABLE parameter eliminates the need for a call to LockISAM(). A LockCtData() call attempting to unlock a record that is part of transaction returns NO_ERROR (0), but sets sysiocod to UDLK-TRN (-3), indicating the lock will be held until the end of the transaction.

Begin

You use the Begin() function to mark the beginning of the transaction. This function will return a transaction number that you may want to use in some situations. You will pass a mode to Begin, which can be either ctTRNLOG or ctPREIMG.

The file modes of all files updated during a transaction must be compatible with the mode used in the Begin() call.

- If the Begin() mode is ctPREIMG, all files updated during the transaction must have been created with a file mode of ctPREIMG, or no transaction processing at all. If an updated file was created with a file mode of ctTRNLOG then the update will return the TTYP_ERR (99) error code. You cannot skip transaction logging if the files have been set up for transaction logging.

- If the Begin() mode is ctTRNLOG, transaction logging for a file will not take place if the file was created with a file mode of ctPREIMG or no transaction mode at all.

If you created a file with a file mode of ctTRNLOG, then you must use ctTRNLOG with your call to Begin() (if the file is to be updated during the transactions).

There are other values that you can OR in with the transaction mode.:

- ctENABLE: Automatically invokes LockISAM( ctENABLE ) for all records in the transaction.

- ctREADREC: Automatically invokes LockISAM( ctREADREC ) for all records in the transaction.

- ctLK_BLOCK: Used in addition to ctENABLE or ctREADREC converts them to ctENABLE_BLK or ctREADREC_BLK, respectively.

ctSAVENV: With this mode, each Begin() and SetSavePoint() saves the current ISAM record information so a subsequent Abort() or RestoreSavePoint() automatically restores the current ISAM record information.

End Transaction - Commit()

When you have finished all of your updates for this transaction, you must end it to “commit” the transaction. Until you have done this, none of the actual transaction updates will occur. The Commit() function is used to do this. In addition to committing the transaction, Commit() can free all locks created during the transaction.

Commit(ctFREE);That is all you have to do to get complete transaction processing protection in your application using FairCom DB. There are, however, other variations on how you can use these functions, as well as additional functions.

Note: If an file update error has occurred during the transaction, and that error has not been resolved properly, FairCom DB will not allow you to successfully commit the transaction. This preserves the integrity of your data.

End

The function Commit() will end the transaction, and commit it. This means that all of the updates that have taken place since the last Begin() call will actually take place. Commit() requires a parameter, either ctFREE or KEEP. ctFREE frees all ISAM locks held for the transaction. KEEP keeps all the locks, though they can be released later with LockISAM( ctFREE ).

Note: If a file update error has occurred during the transaction, and that error has not been resolved properly, FairCom DB will not allow you to successfully commit the transaction. This preserves the integrity of your data.

Record Locking

Record locking requirements are the same with and without transaction processing. Records to be updated or deleted should be locked. Use of ctCHECKLOCK as a file mode will ensure that no updates are performed without locks.

Begin

When you invoke Begin() you can OR the constants ctENABLE or ctREADREC into the transaction mode. If you want the locks to block when they are not available, also OR ctLK_BLOCK into the transaction mode. This automatically invokes LockISAM(), so all ISAM update functions automatically lock records. During the transaction, you can modify the ISAM lock mode by further calls to LockISAM().

Low-level functions still require explicit record locks with LockCtData().

Commit

At the end of the transaction you have different options in the Commit() call:

- You can use a mode of ctFREE to free all locks, both ISAM and low-level locks, automatically (all locks initiated before or during the transaction).

- You can use a mode of ctKEEP to hold onto all current record locks.

- Use ctKEEP_OUT to release only locks obtained during the transaction or on records altered during the transaction, keeping most or all locks obtained before the transaction.

- Use ctKEEP_OUT_ALL to keep all locks obtained before the transaction.

To free any kept locks you must make a LockISAM( ctFREE ) call, or a LockCtData( ctFREE ) call for each low-level record lock.

Aborting a Transaction

There may be situations where you do not want to commit the transaction. Rather, you will want to throw the whole thing out as if it never took place. For instance, you are taking a customer order over the telephone, updating the files as the customer requests certain items. However, the customer decides that the order should not be placed at all, and cancels out. Without transaction processing, you would be faced with the difficult process of going back and reversing out all of the updates that you have done. Alternately, you could build your system so that you don’t actually do the updates, you just keep them in a temporary file. This can also be a problem, as you are not able to place a commitment on a particular inventory item, and when you actually decide to place the order you may find that the items you promised were consumed by another order.

Abort and AbortXtd

This process is made simple by transaction processing. Start the order with a Begin() call, process the order details as you go, and issue a Commit() call to commit the updates when the customer decides to place the order. However, if the customer decides to back out, you can use the Abort() call to abort the transaction. This will abort all of the file updates since the last call to Begin(), as well as releasing all locks. Abort() can be used whether Begin() uses the ctPREIMG or ctTRNLOG mode.

Note: Starting a transaction in an interactive mode will cause long held locks on those items updated, but not yet committed.

Changes in V11.5

Note: This is a Compatibility Change.

When aborting a transaction on the master server failed, we previously returned immediately rather than aborting the transaction on the local server. Now, in this situation, we mark the master server transaction as no longer active and then we abort the local transaction.

When phase 1 of the master server commit fails, we did not reset the active transaction indicator for the master server. This caused us to believe that the transaction on the master server was still active, even though it was not. Now we properly reset the active transaction indicator in this situation.

Savepoints

There are times you want to abort a portion, but not all, of a transaction. You may be processing several optional paths of a program, going down one branch, then backing out and trying another branch. It may be possible that you don’t want any of the updates to occur until you are completely finished, but you want the flexibility to back out a part of the updates. Another possibility would be if you have run into some form of update error, such as an AddRecord() failing due to a duplicate key. You would want to back up to a prior point, correct the problem, and continue. The FairCom Server lets you implement this by using savepoints.

SetSavePoint

A savepoint is a temporary spot in the transaction that you may want to back up to without having to abort the entire transaction. During a transaction, when you want to put a place mark in the process, issue a SetSavePoint() call. This does not commit any of the updates. The function returns a savepoint number, which you should keep track of. You can make as many SetSavePoint() calls as you wish during a transaction, and each time you will be given a unique savepoint number.

RestoreSavePoint

When you decide that you want to back up to a savepoint, issue a RestoreSavePoint() call. You will pass this function the savepoint number that you want to back up to. This returns you to the point at which you issued the specified SetSavePoint() call, without aborting the entire transaction.

Record locks that are held on actions after that savepoint will be released, unless they were also locked by actions prior to the savepoint.

ClearSavePoint

ClearSavePoint() removes a savepoint WITHOUT UNDOING the changes made since the savepoint. Calling ClearSavePoint() puts pre-image space in the same state as if the most recently called savepoint had never been called. By comparison, RestoreSavePoint() cancels changes made since the last savepoint, but does NOT remove this savepoint.

ReplaceSavePoint

ReplaceSavePoint() establishes a savepoint while at the same time clearing the previous savepoint providing a "moving" savepoint within a transaction. Only the most recently established savepoint can be restored to. To restore to this savepoint, call RestoreSavePoint(-1).

Errors in Transactions

The primary purpose of Transaction Processing is to protect the integrity of your data. This is particularly important if you are updating multiple files in one transaction. Earlier in this chapter, we used the example of an invoicing system, where we are updating a customer file, an invoice master and detail file, and an inventory file. We want either the entire invoice process to complete, or none of it. A partially entered invoice is not acceptable.

If an update error occurs, an error flag is set. If you try to use Commit() to commit this transaction, an error code will be returned and the transaction will be aborted.

If an update error occurs we typically have two choices: abandon the entire transaction, or go back to a savepoint and try again. The choice is yours, and the correct choice may depend on what error occurred and how you can correct it.

Abort

To abandon the entire transaction you will use the Abort() function. This will throw away all update actions that occurred since the initial Begin() call. You can start another transaction, or take some corrective action.

RestoreSavePoint

If you have been setting savepoints with the SetSavePoint() function, you can back up to an appropriate place in your transaction with the RestoreSavePoint() function. This allows you to go back part way into the transaction and then continue on, without throwing everything away.

File Operations During Transactions

Creating and opening files is not permitted during a transaction unless the file is created with the Extended File Mode including ctTRANDEP, which may only be created during a transaction. Therefore, all files, without the ctTRANDEP file mode, to be accessed within a transaction must be opened prior to the Begin() call.

Closing Files During Transaction Processing

When closing or deleting a file during a transaction, the current default behavior fails the attempted close or delete with CPND_ERR (588).

To return to the previous default behavior, (automatically abort the transaction and close/delete the file), add #define ctBEHAV_AbortOnCLOSE to ctoptn.h. For the c‑tree Server, add the following to the FairCom Server configuration file:

COMPATIBILITY ABORT_ON_CLOSE

Optional Defer of Close Until Transaction Commit/Abort

FairCom DB supports the ability to defer file closes and deletes during transactions until the transaction is completed or aborted. This allows more flexible file handling without worrying about whether a transaction-controlled file was currently involved within a transaction. SetOperationState() can change the behavior of a file close request when the file has been updated as part of a still active transaction. Turning on OPS_DEFER_CLOSE causes defers a file close or delete until the transaction is committed or aborted.

Updates by other clients do not affect the “update status” of a file for a client who has not updated the file within a transaction.

For example:

OPEN FILE A client #1

OPEN FILE A client #2

OPEN FILE B client #2

SETOPS (OPS_DEFER_CLOSE, OPS_STATE_ON); client #1

SETOPS (OPS_DEFER_CLOSE, OPS_STATE_ON); client #2

TRANBEG client #1

TRANBEG client #2

READ FILE A client #1

UPDATE FILE A client #2

CLOSE FILE A (succeeds without defer) client #1

CLOSE FIL A (deferred) client #2

UPDATE FILE B (suceeds without defer) client #2

TRANEND (causes a CLOSE ON FILE A) client #2

CLOSE FILE B client #2

TRANEND client #1This example shows that client #2’s update does not cause a defer when client #1 closes file A within a transaction in which client #1 had not updated file A, but client #2’s close is deferred.

- A request to reopen the file with the same file number within the same transaction will be honored.

- An attempt to reopen the file with a different file number within the same transaction will be honored, provided there is no overlap with the original file numbers due to index members.

- An attempt to open a different file with the same file number or overlapping file numbers will fail.

For example:

OPEN A as file #2 (where A has two additional index members)

OPEN B as file #10

SETOPS(OPS_DEFER_CLOSE,OPS_STATE_ON)

TRANBEG

UPDATE A

CLOSE A (deferred: file #s 2,3 and 4 still in use by A)

UPDATE B

OPEN A as file #2 (succeeds since same file reusing file numbers)

UPDATE A

CLOSE A (deferred: file #s 2,3 and 4 still in use by A)

UPDATE B

OPEN C as file #2 (fails because of defer on file A)

OPEN A as file #0 (fails because of overlap with itself)

OPEN A as file #9 (fails because of overlap with B)

OPEN A as file #5 (succeeds)

OPEN C as file #2 (succeeds because file A now reassigned)There are restrictions on file mode changes between a deferred close and a subsequent reopen within the same transaction. A file originally opened in exclusive mode can be reopened in ctSHARED mode. A file originally opened in ctSHARED mode must be reopened in ctSHARED mode. ctREADFIL is not allowed on a reopen.

Notes

- ctEXCLUSIVE reopen of a deferred close of a ctSHARED file - If a file opened ctSHARED has its close deferred, and an attempt is made to “reopen” the file in ctEXCLUSIVE mode, the reopen will now succeed under the Server if no one else has the file opened. For example, the second open below succeeds only if no other user has the file open.

datno = OpenFileWithResource(,ctSHARED);

Begin;

DO_Stuff();

CloseIFile(datno);

datno = OpenFileWithResource(,ctEXCLUSIVE);

Commit();Here are the expected results for deferred close and subsequent open requests:

| Current status | Same user | Another user |

|---|---|---|

| Deferred close after ctEXCLUSIVE open | May reopen the file in ctEXCLUSIVE or ctSHARED mode | Cannot open file |

| Deferred close after ctSHARED open | May reopen the file in ctSHARED mode. May reopen the file in ctEXCLUSIVE mode if no other user has the file open. | May open the file in ctSHARED mode |

- File number change after deferred close - In single-user TRANPROC, a deferred close followed by a reopen with a different file number returns CHGF_ERR (645). To avoid this error, always re-open using the same file number. For ISAM files, this is easily accomplished using OpenFileWithResource() with a -1 for filno or using OpenIFile() with an IFIL structure setting dfilno to -1.

Defer File Delete

If OPS_DEFER_CLOSE is on, a file delete on a file updated by a still active transaction will be deferred until the commit or abort of the transaction. An attempt to reopen the file within the same transaction results in a FNOP_ERR (12) as if the file were actually gone. An attempt to re-create the file within the same transaction will not succeed because the file has not yet been deleted.

Transaction Dependent Creates and Deletes

A major transaction-processing feature, known as Transaction Dependent Creates/Deletes, supports the creation and deletion of Extended files under transaction control. For Standard files, the physical creation and deletion of files from disk is handled outside the scope of transaction control. In other words, Standard files do not support such operations as “aborting a create” or “rolling back a delete.”

If you are using ISAM to create the files with transaction control, Transaction Dependent Creates/Deletes are a best practice. (If you are using SQL to create the files with transaction control, this mode is automatically used.) The main benefit of this mode is helping automatic recovery to know which files should exist. When files are created without this mode, you may need to use SKIP_MISSING_FILES YES during recovery, which is discouraged except when specific error messages indicate it is necessary.

File creates and deletes may be part of a transaction and are subject to being undone in case of crashes, savepoint restores, and aborts. This is accomplished with the extended file mode ctTRANDEP. An Extended file with ctTRANDEP in its extended file mode is assumed to utilize transaction-dependent creates and deletes and must be created within a transaction. There is no special function call for transaction dependent creates or deletes.

If a different client attempts to open or create a file pending delete, DPND_ERR (643) is returned to signify that opens/creates must await the commit or abort of the delete.

To utilize a transaction-dependent delete with a file not created with ctTRANDEP, it will be necessary to update the file header to include the ctTRANDEP bit in the x8mode member of the extended header.

To support the rollback of a transaction-dependent file delete, which requires FairCom DB to maintain a copy of the old file after the delete is committed, use the ctRSTRDEL extended file mode. ctRSTRDEL automatically implies ctTRANDEP, but the reverse is not true. When only ctTRANDEP is used, the overhead of storing copies of an old file after its delete is committed is avoided.

A transaction-dependent create is only supported by the Xtd8 create routines, and requires an extended file mode with ctTRANDEP and/or ctRSTRDEL included. To perform a transaction-dependent create of a Standard FairCom DB file, call one of the create routines with ctNO_XHDRS and either ctTRANDEP or ctRSTRDEL included in the extended file mode. Including ctNO_XHDRS in the extended file mode causes the create routines to create a Standard file. If ctNO_XHDRS conflicts with other extended file mode bits, then the create will fail with XCRE_ERR (669).

To convert an existing Standard file to support transaction-dependent deletes, use calls of the form:

UpdateHeader(filno,ctTRANDEP,ctXFL_ONhdr);or

UpdateHeader(filno,ctRSTRDEL,ctXFL_ONhdr);However, these UpdateHeader() calls cannot convert a Standard file to an Extended file.

- Dynamic Dumps - A file with ctTRANDEP in its extended file mode that is deleted after the effective dump time will be sure to exist at the time this particular file is dumped. This accomplished by making the delete wait until the file is dumped.

- PermIIndex8() and TempIIndexXtd8() support ctTRANDEP creates. Without ctTRANDEP creates, these routines cannot be called within a transaction. With ctTRANDEP creates, they MUST be called within a transaction.

- DropIndex() marks a transaction-controlled index for deletion by setting keylen to -keylen until the drop is committed. A GetIFile() call after the DropIndex() call, but before the Commit() or Abort() will return this interim setting.

Note: As of version 13.0.3, only the creator of a transaction-dependent file can open it in shared mode before the associated transaction commits. Once the transaction commits, the file automatically transitions to shared mode for all users.

In versions before 13.0.3, shared mode was not usable before the transaction was committed.

Transaction Processing Logs

The FairCom transaction management logic creates special system files to record various kinds of information necessary to recover from problems. The majority of this section applies to both the FairCom Server and single-user (stand-alone) transaction processing enabled applications; therefore, the generic term application refers to both the FairCom Server and a single-user application.

The following list details the files created:

Note: To be compatible with all operating systems, the names for all these files are upper case characters. For a complete backup, be sure these files are saved when appropriate (i.e., backed up) and used for recovery if necessary. However, except for FAIRCOMS.FCS, do NOT include these files in your dynamic dump script.

- System Status Log - When the application starts up, and while it is running, the transaction management logic keeps track of critical information concerning the status of the application (e.g., when it started, whether any error conditions have been detected, and whether it shuts down properly). This information is saved in chronological order in a system status log, named CTSTATUS.FCS.

- Administrative Information Tables - FairCom Server ONLY. The FairCom Server creates and uses FAIRCOM.FCS to record administrative information concerning users and user groups.

-

Transaction Management Files - The transaction management logic creates the following four files for managing transaction processing:

- I0000000.FCS

- D0000000.FCS

- S0000000.FCS

- S0000001.FCS

- It is important to safeguard these files, especially the two whose names begin with ‘S’.

-

Active Transaction Logs - Information concerning ongoing transactions is saved on a continual basis, in a transaction log file. A chronological series of transaction logs is maintained during the operation of the application. Transaction logs containing the actual transaction information are saved as standard files. They are given names in sequential order, starting with L0000001.FCS (which can be thought of as active transaction log, number 0000001) and counting up sequentially (i.e., the next log file is L0000002.FCS, etc.).

- By default, the transaction management logic saves up to four active logs at a given time. When there are already four active log files and another is created, the lowest numbered active log is either deleted or saved as an inactive transaction log file, depending on how the FairCom Server is configured (see the term “Inactive transaction logs” below).

- Every new session begins with the application checking the most recent transaction logs (i.e., the most recent 4 logs, which are always saved as “active” transaction logs) to see if any transactions need to be undone or redone. If so, these logs are used to perform automatic recovery.

- Inactive transaction logs - Transaction logs that are no longer active (i.e., they are not among the four most recent log files) are either deleted or saved as inactive transaction log files when new active log files are created. The choice of deleting old log files or saving them as inactive log files is made when configuring the Server (see the KEEP_LOGS configuration option). The number of active single user transaction logs is discussed in Single-User Transaction Processing.

An inactive log file is created from an active log file by renaming the old file, keeping the log number (e.g., L0000001) and changing the file’s extension from .FCS to .FCA. The application Administrator may then safely move, delete, or copy the inactive, archived transaction log file.

When an apparently old log file is encountered while transaction processing attempts to create the next log file (e.g., log 5 is about to be created, but a log 5 already exists), FairCom DB attempts to first rename the old log (from .FCA to .FCQ) and then attempts to create the new log. If this succeeds, then the system continues without interruption (although an entry is made in CTSTATUS.FCS and on server systems a notice of this event is routed to the console). If the renaming does not cure the problem then a NLOG_ERR (498) will be returned and the system will terminate due to a failed transaction write.

Note: The *.FCA files should be saved for use in cases when the .FCS files are needed for a backup. In the event of a system failure, be sure to save all the system files (i.e., the files ending with .FCS). CTSTATUS.FCS may contain important textual information concerning the failure.

When there is a system failure due to power outage, there are two basic possibilities for recovery:

- When the power goes back on, the system will use the existing information to recover automatically, or

- The Administrator will need to use information saved in previous backups to recover (to the point of the backup) and restart operations.

Automatic Log Adjustments

In extreme circumstances, the size of the logs will expand or the number of active logs will increase. A very large transaction, perhaps with a large variable-length record, may require a single log file to expand to hold the entire transaction. A transaction that takes a long time to commit or abort may require the number of active logs to increase. Both of these conditions are temporary, and are automatically handled by the FairCom Server.

Automatic Log Size Adjustment

Long variable-length data records can cause transaction logs to roll over so fast that checkpoints are not properly issued. By default, the FairCom Server automatically adjusts the size of the log files to accommodate long records. As a rule of thumb, if the record length exceeds about one sixth of the individual log size, the size will be proportionately increased. When this occurs, CTSTATUS.FCS receives a message with the approximate new aggregate disk space devoted to the log files.

To disable this adjustment, add FIXED_LOG_SIZE YES to the FairCom Server configuration file. A single-user TRANPROC application can disable the adjustment by setting the global variable ctfixlog to a non-zero value.

Note: When disabled, a record which would have caused an adjustment results in error TLNG_ERR (654) when it is written to a file supporting full transaction processing. It does not apply to pre-image files unless they are part of a dynamic dump with PREIMAGE_DUMP turned on.

ctLOGRECLMT defaults to 96MB in ctclb3.c. If a record exceeds this length and log adjustment is not permitted, the log is not adjusted. An entry is made in CTSTATUS.FCS indicating the approximate record length in megabytes.

Automatic Increase of Active Transaction Logs

If a committed transaction gets its Commit() written to disk before being flagged for abandonment, it is still possible the transaction will not complete before the log containing the Begin() is made inactive. After writing the Commit(), the pre-image space still must be merged with the real files before the Commit() can return. If this is about to happen, the number of active log files is increased unless the FIXED_LOG_SIZE has been activated, in which case the system must terminate.

SystemConfiguration Log Space Reporting

SystemConfiguration() can return the current approximate space in MB for the log files. With the automatic adjustments to the log size and the number of active log files, log space can increase as the FairCom Server operates. cfgLOG_SPACE is the index into the array of LONGs filled in by SystemConfiguration().

SystemConfiguration Log Reporting Enhancements

SystemConfiguration() can return the data record length limit that triggers an increase in the size of the log files. Transaction controlled records longer than this limit increase the size of the log files or cause a TLNG_ERR (654) if the log space is fixed. cfgLOG_RECORD_LIMIT is the index into the array of LONGs filled in by SystemConfiguration(). The initial record length limit is approximately one fortieth, 1/40, of the total log space. The limit is reset each time the log size is adjusted.

Flush Directory Metadata to Disk for Transaction-Dependent File Creates, Deletes and Renames

When a file is created, renamed, or deleted, the new name of the file is reflected in the file system entry on disk only when the containing directory's metadata is flushed to disk. If the system crashes before the metadata is flushed to disk, the data for the file might exist on disk, but there is no guarantee that the file system contains an entry for the newly created, renamed, or deleted file. In a test case we noticed that after a system power loss a transaction log containing valid log entries still had the name of the transaction log template file.

In release V11 and later, c-tree ensures that creates, renames, and deletes of transaction log files and transaction-dependent files are followed by flushing of the containing directory's metadata to disk. This change also applies to other important files such as CTSTATUS.FCS, the master key password verification file, and files created during file compact operations (even if not transaction dependent).

To revert to the old behavior, add COMPATIBILITY NO_FLUSH_DIR to ctsrvr.cfg.

Automatic Recovery

Once you establish transaction processing you can take advantage of automatic recovery. Transaction logging places enough information in the transaction log files to ensure transactions can be undone or redone during automatic recovery.

If the application crashes for some reason (anything from a software problem to power failure of the hardware) while instructions are being processed, the application will detect that a problem has occurred the next time it starts up. The transaction management logic will then automatically reset the database back to the last, most complete state that it can, using the transaction log. Transactions committed before the crash will be redone, while those not yet committed will be undone.

Only those files that were created with a transaction mode of ctTRNLOG will be affected.

Automatic recovery also comes into play if the file system is heavily buffered (which dramatically improves performance). With buffering, updates that have been committed may still be sitting in a file buffer somewhere, not yet written to disk. This can be offset by using the file mode of ctWRITETHRU, but that may slow the system down noticeably. Don’t use ctWRITETHRU with ctTRNLOG files. ctWRITETHRU is not necessary because the database server can detect if these buffered transactions have not yet been written to the disk, and will use the transaction log to complete them.

When keeping memory use to a minimum is important, and when automatic recovery requires more FairCom DB file control blocks, set separate limits on the number of files used during automatic FairCom Server recovery and regular FairCom Server operation. Auto recovery may require more files than regular operation because during recovery, files once opened stay open until the end of recovery, which may not be the case with regular operation.

For the FairCom Server, the configuration keyword RECOVER_FILES takes as its argument the number of files to be used during recovery. If this is less than the number to be used during regular operation, (specified by the FILES keyword), the recovery files is set equal to the regular files, and the keyword has no affect. If the recovery files is greater than the regular files, at the end of automatic recovery the number of files is adjusted downward. This frees the memory used by the additional control blocks, ~900 bytes per each logical data file and index.

For non-server implementations, set the variable ctrcvfil to the number of files to use during recovery. In a ctNOGLOBALS implementation, ctrcvfil can only be accessed prior to initializing FairCom DB if the global structure has already been allocated by registering FairCom DB.

If automatic recovery fails on a FairCom Server because a file is missing or does not match the unique file ID, CTSTATUS.FCS receives a message stating SKIP_MISSING_FILES YES has been added to the FairCom Server configuration file. The automatic recovery continues in this case.

For a single-user TRANPROC application, a message about setting ctskpfil to one, (enabled), is placed in CTSTATUS.FCS. When missing files are skipped, the listing of the skipped files indicates the type of log entry that triggered the skipped files message:

- RCVchk - checkpoint log entry

- RCVopn - a file open log entry

- RCVren - a file rename log entry

The RCVchk or RCVopn could potentially indicate a serious problem, unless of course the file reported has been intentionally deleted or moved. The RCVren value most likely indicates the old or original file has not been located. Of course, if the listed file is the new file this could present a serious problem.

Transaction High-Water Marks

The FairCom transaction processing logic, used by the FairCom Server and in single-user transaction processing, uses a system of transaction number high-water-marks to maintain consistency between transaction controlled index files and the transaction log files. When log files are erased, the high-water-marks maintained in the index headers permit the new log files to begin with transaction numbers which are consistent with the index files.

For more information regarding transaction high-water marks, please refer to Transaction High-Water Marks.

Transaction Processing On/Off

Transaction processing control can be dynamically enabled and disabled for data and index files. UpdateFileMode() can modify the transaction processing characteristics of a file. By putting ctTRNLOG in the file mode argument of UpdateFileMode(), transaction processing is enabled. By omitting ctTRNLOG in the call to UpdateFileMode(), transaction processing is disabled. However, an index file must be initially created with ctTRNLOG in the file mode to switch back and forth. If an index file is not originally created with ctTRNLOG, it must be rebuilt from scratch to enable ctTRNLOG. One reason to disable ctTRNLOG support might be to run a large batch processing program, after which ctTRNLOG support is re-enabled. The file must be opened exclusively for UpdateFileMode(). The following code is an example of removing ctTRNLOG from the file filno.

if (UpdateFileMode(filno,ctPERMANENT|ctEXCLUSIVE|ctFIXED))

printf("\nFile Mode Error %d",uerr_cod);Note: This is a low-level function that must be called for each physical file in an ISAM set. It will only update one file at a time.

Two-Phase Transactions

Two-Phase transaction support allows, for example, a transaction to span multiple servers. This is useful for updating information from a master database to remote databases in an all-or-nothing approach.

To start a transaction that supports a two-phase commit, you would include the ctTWOFASE attribute in the transaction mode passed to the Begin() function. Call the TRANRDY() function to execute the first commit phase, and finally Commit() to execute the second commit phase.

Note: You may need additional caution with regard to locking and unlocking of records as your transactions become more complex in a multi-server environment to avoid performance problems.

Example

(Note that this example could also use individual threads of operation for the different c-tree Server connections, avoiding the c-tree instance calls.)

COUNT rc1,rc2,filno1,filno2,rcc1,rcc2;

TEXT databuf1[128],databuf2[128];

/* Create the connections and c-tree instances */

...

if (!RegisterCtree("server_1")) {

SwitchCtree("server_1");

InitISAMXtd(10, 10, 64, 10, 0, "ADMIN", "ADMIN", "FAIRCOMS1");

filno1 = OPNRFIL(0, "mydata.dat", ctSHARED);

FirstRecord(filno1, databuf1);

memcpy (databuf1, "new data", 8);

/* Prepare transaction on c-tree server 1 */

Begin(ctTRNLOG | ctTWOFASE | ctENABLE);

ReWriteRecord(filno1, databuf2);

rc1 = TRANRDY();

}

if (!RegisterCtree("server_2")) {

SwitchCtree("server_2");

InitISAMXtd(10, 10, 64, 10, 0, "ADMIN", "ADMIN", "FAIRCOMS2");

filno2 = OPNRFIL(0, "mydata.dat", ctSHARED);

FirstRecord(filno2, databuf2);

memcpy (databuf2, "new data", 8);

/* Prepare transaction on c-tree server 2 */

Begin(ctTRNLOG | ctTWOFASE | ctENABLE);

ReWriteRecord(filno2, databuf2);

rc2 = TRANRDY();

}

/* Commit the transactions */

if (!rc1 && !rc2) {

SwitchCtree("server_1");

rcc1 = Commit(ctFREE);

SwitchCtree("server_2");

rcc2 = Commit(ctFREE);

if (!rcc1 && !rcc2) {

printf("Transaction successfully committed across both servers.\n");

} else {

printf("One or more units of the second commit phase of the transaction failed: rcc1=%d rcc2=%d\n", rcc1, rcc2);

}

} else {

printf("One or more of the transactions failed to be prepared: rc1=%d rc2=%d\n", rc1, rc2);

printf("Pending transactions will be aborted.\n");

SwitchCtree("server_1");

Abort();

SwitchCtree("server_2");

Abort();

}

/* Done */

SwitchCtree("server_1");

CloseISAM();

SwitchCtree("server_2");

CloseISAM();

Note: Two-Phase transactions can become extremely difficult to debug should there be communications problems between servers at any time during the second commit phase. This can result in out of sync data between the servers as one server may have committed while another server failed. It is always appropriate to check the return codes of the individual Commit() functions to ensure a complete successful transaction commit across multiple servers.

User Defined Transaction Log Entries

The FairCom DB transaction logs contain information detailing the complete history of a user transaction. These logs guarantee that in the event of a catastrophic FairCom DB failure (e.g. a power failure) the existing data and index files can be brought back to a consistent state when the server restarts. The transaction logs also allow the server to make online backups via the c-tree Dynamic Dump feature.

FairCom DB permits a client to connect and read the transaction logs directly through a new API, useful in replicating transactions across servers. There are situations where it would be useful for a user to insert their own entries into the transaction logs.

TRANUSR() permits users to make their own entries in the transaction log. This function is designed for only the most advanced users, and will be of limited value unless the user has a facility to read the transaction logs. The long name of this function is UserLogEntry().

Immediate Independent Commit Transaction (IICT)

The Immediate Independent Commit Transaction, IICT, permits a thread with an active, pending transaction to also execute immediate commit transactions, even on the same physical file that may have been updated by the still pending (regular) transaction. An IICT is essentially an auto commit ISAM update, but with the added characteristic that an IICT can be executed even while a transaction is pending for the same user (thread).

It is important to note that the IICT is independent of the existing transaction: it is as if another user/thread is executing the IICT. The following pseudo code example demonstrates this independence:

Example

- Begin transaction

- ISAM add record R1 with unique key U to file F

- Switch to IICT mode

- ISAM add record R2 with unique key U to file F: returns error TPND_ERR (420)

If we did not switch to IICT mode, the second add would have failed with a KDUP_ERR (2); however, the IICT mode made the second add use a separate transaction and the second add found a pending add for key U, hence the TPND_ERR. Just as if another thread had a key U add pending.

A data file and it's associated indices are put into IICT mode with a call

PUTHDR(datno,1,ctIICThdr)and are restored to regular mode with a call

PUTHDR(datno,0,ctIICThdr)It is possible in c-tree for a thread to open the same file in shared mode more than once, each open using a different user file number. And it is possible to put one or more of these files in IICT mode while the remaining files stay in regular mode.

Note: If a file has been opened more than once by the same thread, then the updates within a (regular) transaction made to the different file numbers are treated the same as if only one open had occurred.

These special filno values enable specific IICT operations:

- ctIICTbegin -1

- ctIICTcommit -2

- ctIICTabort -3

IICT File Create Example

TRANBEG(ctTRNLOG|ctENABLE);

if ((rc = PUTHDR(ctIICTbegin, ctTRNLOG, ctIICThdr))) {

printf("Error: Failed to switch into IICT mode: %d\n", rc);

goto err_ret;

}

if ((rc = CREIFILX8(&vcustomer, NULL, NULL, 0L, NULL, NULL, xcreblk))) {

printf("Error: Failed to create files: %d\n", rc);

goto err_ret;

}

if ((rc = PUTDODA(vcustomer.tfilno, doda, 7))) {

printf("Error: Failed to add DODA to file: %d\n", rc);

goto err_ret;

}

CLIFIL(&vcustomer);

if ((rc = PUTHDR(ctIICTcommit, 0, ctIICThdr))) {

printf("Error: Failed to switch out of IICT mode: %d\n", rc);

goto err_ret;

}

if ((rc = TRANABT())) {

printf("Error: Failed to abort transaction: %d\n", rc);

goto err_ret;

}

if ((rc = OPNIFIL(&vcustomer))) {

printf("Error: Failed to open files after committing IICT and aborting tran: %d sysiocod=%d\n", rc, sysiocod);

goto err_ret;

}

printf("Sucessfully opened files after committing IICT and aborting tran.\n");IICT Record Add Example

if ((rc = OPNIFIL(&vcustomer))) {

printf("Error: Failed to open files: %d\n",

rc);

goto err_ret;

}

datno2 = vcustomer.tfilno;

if ((rc = PUTHDR(datno2, 1, ctIICThdr))) {

printf("Error: Failed to switch into IICT mode for file: %d\n",

rc);

goto err_ret;

}

/* start general IICT */

if ((rc = PUTHDR(ctIICTbegin, ctTRNLOG, ctIICThdr))) {

printf("Error: Failed to switch into IICT mode: %d\n",

rc);

goto err_ret;

}

/* add record to file with file-specific IICT enabled */

memset(recbuf, 'c', reclen);

if ((rc = ADDVREC(datno2, recbuf, reclen))) {

printf("Error: Failed to add record: %d\n",

rc);

goto err_ret;

}

/* complete the IICT */

if ((rc = PUTHDR(ctIICTcommit, 0, ctIICThdr))) {

printf("Error: Failed to switch out of IICT mode: %d\n",

rc);

goto err_ret;

}

if ((rc = TRANABT())) {

printf("Error: Failed to abort transaction: %d\n",

rc);

goto err_ret;

}

if ((rc = FRSVREC(datno2, recbuf, &reclen))) {

printf("Error: Failed to read record: %d\n",

rc);

goto err_ret;

}

Single-User Transaction Processing

FairCom DB includes single-user transaction processing, which provides some of the same features to ensure data-integrity that are found in FairCom Server, such as full automatic recovery and log dump utilities.

Single User Transaction Processing Control

The single user transaction processing logic includes the same robust features found with FairCom Server, including full automatic recovery and log dump utilities. The following information is included for the single user transaction processing developer who has minimal hard drive space available for the log files. The following defines control the quantity and size of the transaction log files. The values listed are the default defines and the minimum recommendations.

Note: If defines are changed, you must rebuild the FairCom DB library, recompile and relink your application.

| Define | Location | Default | Minimum |

|---|---|---|---|

| LOGPURGE | cttran.h | 4 | 3 |

| LOGCHUNKX | cttran.h | 1100000L | 380000L |

| LOGCHUNKN | cttran.h | 550000L | 190000L |

| MINCHKLMT | ctinit.c | 75000L | 50% of LOGCHUNKN |

| MINLOGSPACE | ctinit.c | 2000000L | 750000L |

Deviating from the default or minimum recommendations is done so at the user’s own risk. If deviating from the above defines:

- The LOGPURGE define is the number of active transaction log files.

- The MINLOGSPACE is the total space to be used by all active transaction log files.

- LOGCHUNKX is derived by roughly dividing MINLOGSPACE by 2.

- LOGCHUNKN is derived by roughly dividing LOGCHUNKX by 2.

- MINCHKLMT is the smallest number of bytes permitted between checkpoints.

The *.FCS files may be removed if a clean shutdown is performed. A clean shutdown is performed by issuing a CloseISAM() with no active transactions pending.

To modify the number of inactive log files which are maintained (default is zero), add “extern int ct_logkep;” to your application, if not using ctNOGLOBALS. If ctNOGLOBALS is defined, it will already be properly declared. Set ct_logkep to -1 if you wish to keep all inactive logs, or set ct_logkep to the number of inactive log files you wish to keep. Default for ct_logkep is 0, which indicates to use the default transaction log behavior which is to save the most current 4 (including the active) transaction logs.

Clear Transaction Logs

It is optionally permitted for single-user transaction processing applications to remove S*.FCS and L*.FCS upon a successful shutdown. The FairCom DB checkpoint code determines at the time of a final checkpoint if there are no pending transactions or file opens, and if the user profile (see InitInitISAMXtd in the function reference section) has the USERPRF_CLRCHK bit turned on. If so, the S*.FCS files are deleted, and the current L*.FCS files are deleted. The USERPRF_CLRCHK option is off by default.

Note: If the application is causing log files to be saved (very unusual for a single-user application), the files are not cleared.

If the log files are cleared, the following sequence MAY lead to error HTRN_ERR (520):

- Begin()

- Open a transaction-controlled index

- Update index

That is, opening the index within a transaction and then updating the index could cause a conflict with FairCom DB’s internally maintained transaction high-water mark.

Log Paths

SETLOGPATH() sets the path for the transaction processing log files, start files and temporary support files for single-user TRANPROC applications.

Call SETLOGPATH() before the actual initial call to FairCom DB, i.e., before the call to InitCTree(), InitISAM(), etc. It does not set uerr_cod, but returns the error code or zero if successful. If FairCom DB is shutdown and restarted within an application, SETLOGPATH() must be repeated just prior to each initial call to FairCom DB.

If ctNOGLOBALS is used, the instance must be registered with RegisterCtree() before SETLOGPATH() is called.

See SETLOGPATH for additional information.

Additional Single-User Transaction capabilities

The following additional single-user controls are available. Server configuration file keywords are repeated prior to the method for implementing in single-user mode:

- It is possible to load one or more transaction logs into memory during automatic recovery to speed the recovery process. The keyword RECOVER_MEMLOG may be placed into the configuration file with an argument, which specifies the maximum number of memory, logs to load into memory during automatic recovery (default is 0).

RECOVER_MEMLOG <# of logs to load>- In FairCom DB single-user, transaction processing mode, the global variable ctlogmem should be set to one (1), and ctlogmemmax should be set to the maximum number of logs to load into memory.

- The CHECKPOINT_INTERVAL keyword can speed up recovery at the expense of performance during updates. The interval between checkpoints (transaction processing only) is measured in bytes of log entries. It is ordinarily about one-third (1/3) the size of one of the active log files (L000....FCS). Reducing the interval will speed up automatic recovery at the expense of performance during updates (default is 833333).

CHECKPOINT_INTERVAL <interval in bytes>- For example, adding CHECKPOINT_INTERVAL 150000 to the configuration file will cause checkpoints about every 150,000 bytes of log file.

- In FairCom DB single-user, transaction processing mode set the LONG global variable ctlogchklmt to the desired value.

- Faster index automatic recovery is available through the index file mode, ctLOGIDX. Transaction controlled indexes with this file mode are recovered more quickly than with the standard transaction processing file mode ctTRNLOG. This feature can significantly reduced recovery times for large indexes and should have minimal affect on the speed of index operations.

ctLOGIDX is only meaningful if the file mode also includes ctTRNLOG. Note that ctLOGIDX is intended for index files only! Do not use ctLOGIDX with data files.

ctLOGIDX must defined prior to building single-user FairCom DB library.

ctLOGIDX support may be forced on, off or disabled with the FORCE_LOGIDX server configuration file entry.

FORCE_LOGIDX <ON | OFF | NO>

- ON forces all indexes to use the ctLOGIDX entries;

- OFF forces all indexes not to use ctLOGIDX entries;

- NO allows existing file modes to control the ctLOGIDX entries and is the default.

In FairCom DB single-user transaction processing mode, set the global variable ctlogidxfrc as follows: 1 for ON, 2 for OFF and 0 for NO. If ctNOGLOBALS is in use, then either the CTVAR structure must be allocated, (typically by calling RegisterCtree()), prior to the FairCom DB initialization call, (so that the member corresponding to ctlogidxfrc can be set), or setting ctlogidxfrc must be delayed until after the initial call to FairCom DB. If delayed, then turning ctLOGIDX entries off (ctlogidxfrc == 2) cannot be done until after the initialization call and its possible need for automatic recovery.

Single-user Transaction processing hard coded file zero conflict

A significant improvement, introduced in v6.8, alleviated the need for an application to “know-up-front” the number of FairCom DB data/index files required for c-tree initialization. Internally, this feature is referred to as ctFLEXFILE. When the default ctFLEXFILE option is combined with the single-user transaction-processing model in an application using a hard coded file number of zero, there is a potential for file zero numbering conflict.

File numbers can be assigned either by the application developer (this is, hard coded) or dynamically by FairCom DB when the application developer uses -1 instead of hard coding the number in the file create or open call. The preferred method is to let FairCom DB assign the file numbers.

If your application must use file zero (0), use the ‘#define ctBEHAV_TranFileNbr’ to avoid file-numbering conflicts.

If ctFLEXFILE is defined and single-user TRANPROC is defined, then an internal transaction related file might have its file number assigned in the middle of the subsequent file number range if the ctFLEXFILE processing increases the number of active c-tree FCBs after automatic recovery processing. This modification causes the internal file number to come at the beginning of the file number range unless ctBEHAV_TranFileNbr is defined. If the BEHAV define is on, then the behavior stays the same as the original ctFLEXFILE code release.

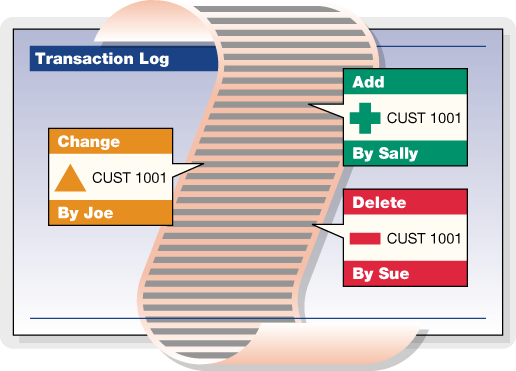

Transaction History

TransactionHistory() accesses audit logs of transaction controlled files to add valuable historical capabilities in any application. This allows the programmer to examine the changes performed on each unit of information at a very detailed level.

One such use of this technology is to track the changes made to a manufactured device as it proceeds through an automated fabrication factory. The exact history of each individual manufactured device can be determined from the transaction log files.

The following types of information can be gathered from the transaction logs:

- A particular record position of a specified data file.

- A particular, unique key value of a specified index.

- All updates to a specified file.

- Updates from particular user ID and/or node name.

- Some combination of the above.

TransactionHistory Basic Operation

TransactionHistory() can be used on-line, interrogating current files and transaction logs as part of an active application. It can also be used off-line, examining a set of data, index, and log files saved from a single-user or client-server application. When scanning backward, TransactionHistory() looks for both active log files, ending with .FCS, and for inactive log files, ending with FCA.

TransactionHistory() makes four types of calls. The first two are search calls returning log entries. The third specifies a beginning log number, and the last resets the history state, permitting a new set of history calls. These calls can be characterized as follows:

| First search call | Specifies the search characteristics and the return information type. This call returns the first entry in the log satisfying the search criteria. |

| Subsequent search calls | Returns the next entry satisfying the search criteria. Do not specify the search criteria or the return information type. These are specified in the first search call. Specify an output buffer address and length on every search call. |

| Preliminary log call | Only specifies a beginning log number. |

| Terminating call | Cleans up the current history set permitting a new set of history calls starting with a new first search call. |

For on-line use, make a first search call, then make subsequent search calls. An on-line search works backward through the files, beginning with the current log position.

For an off-line utility, use TransactionHistory() almost the same as an on-line search, except an optional preliminary log call can specify the starting log number. Off-line searches may be either forward or backward through the logs. While backward searches are the most common, if additional log files have accumulated since the data and index files were saved, a forward search can be meaningful.

Beginning with V6.7, the file create, open, and close entries in the log carry a time stamp permitting TransactionHistory() to locate the appropriate position in the log to begin off-line searches. However, when not specifying an explicit file, the beginning log number, set with a preliminary log call, helps narrow the TransactionHistory() search.

When TransactionHistory() returns a non-zero error code, it automatically frees internal memory allocated to the current history set and resets the history status to allow a new history set. No terminating call is required. When no more information in the transaction logs satisfies the search criteria, TransactionHistory() returns HENT_ERR (618). A first search call made before the log entries for the current history set are exhausted and before a terminating call is made returns HMID_ERR (622).

To switch to a new set of history calls before exhausting all log entries in the current history set, either terminate the existing history set, or use multiple history sets. See Multiple History Sets under Advanced Operations.

Preliminary log calls and terminate calls appear as follows:

TransactionHistory(-1, (pVOID)0, (pVOID)0, recbyt, (VRLEN)0, ctHISTlog);Where recbyt is set to the beginning log number on a preliminary log call, or where recbyt is set to 1L to terminate the current history set.

Subsequent search calls appear as follows:

TransactionHistory(-1, (pVOID)0, bufptr, (LONG)0, bufsiz, ctHISTnext);Where bufptr points to the output buffer and bufsiz specifies the length of bufptr.

A first search call mode includes ctHISTfirst. If searching forward through the logs, ctHISTfrwd must be OR-ed into mode, otherwise it defaults to backward. The three types of matching or search criteria include:

| ctHISTpos | Matches the data record position, with a recbyt of zero matching all data record positions. |

| ctHISTkey | Matches the key value, with a null target matching all key values. |

| ctHISTuser and/or ctHISTnode | Matches user ID or node name respectively, with an empty string target matching all user ID’s. |

A first search call must use exactly one of these three matching criteria: (1) ctHISTpos, (2) ctHISTkey, or (3) one or both of ctHISTuser and ctHISTnode. Any of the three can be used to specify the search criteria over specific files, signified by a non-negative filno). In a search over all files, signified by a filno of -1, ctHISTpos and ctHISTkey are not used, but one or both of ctHISTuser and ctHISTnode must OR-ed in. In all cases, either ctHISTdata or ctHISTindx must specify the return type. The following table gives the interpretation of mode, filno, target, and recbyt in the first search call:

Note: The first 6 column headings in the following table should be prefaced with ctHIST, i.e., pos is ctHISTpos, key is ctHISTkey, etc.

Possible First Search Call Combinations

| p o s |

k e y |

u s e r |

n o d e |

d a t a |

i n d x |

filno | target | recbyt | interpretation |

|---|---|---|---|---|---|---|---|---|---|

| a | a | x | -1 | userID | zero | Return all data entries for all data files updated by matching user. | |||

| a | a | x | -1 | userID | zero | Return all index entries for all index files updated by matching user. | |||

| x | x | Data | NULL | zero | Return all data entries for specified data file. | ||||

| x | x | Data | NULL | non-zero | Return data entries matching recbyt for specified data file. | ||||

| a | a | x | Data | userID | zero | Return all data entries for specified data file made by matching user. | |||

| a | a | x | Data | userID | non-zero | Return data entries matching recbyt for specified data file made by matching user. | |||

| x | x | Indx | key | zero | Return all data entries with index matching key*. | ||||

| x | x | Indx | key | non-zero | Return all data entries with index matching key and recbyt**. | ||||

| x | x | Indx | NULL | zero | Return all index entries for specified index file. | ||||

| x | x | Indx | NULL | non-zero | Return all index entries for specified index file which match recbyt. | ||||

| x | x | Indx | NULL | non-zero | Return all data entries with index matching recbyt for specified index file. | ||||

| a | a | x | Indx | userID | zero | Return all index entries for specified index file made by matching user. | |||

| a | a | x | Indx | userID | non-zero | Return all index entries for specified index file that match recbyt and made by matching user. |

Note:

| x | Indicates the bit is turned on in mode. |

| a | (active) indicates one or more of these bits is turned on. |

| * | Index matching key means the key value for the data record matches target for the specified index file. |

| ** | an index entry matching recbyt means the index entry points to a record offset matching recbyt. |

Two additional mode bits may be OR-ed in: ctHISTinfo and ctHISTnet.

- ctHISTinfo returns the user ID and node name of the process which made the log entries in the form of a null terminated ASCII string with a vertical bar (‘|’) preceding the node name, if present. This user information is in addition to the data or index information requested.

- For searches of specific files with specific matching criteria, i.e., a non-null target for an index or a non-zero recbyt for a data file, ctHISTnet returns only the net affect of each transaction, not each individual update within the transaction.

When mode includes ctHISTuser or ctHISTnode, pass the user ID in target as a null terminated ASCII string. If only ctHISTuser is on, target is case insensitive, corresponding to the user ID’s specified during logon to a FairCom Server. If only ctHISTnode is on, target is case sensitive, corresponding to the node name set by SETNODE(). If both ctHISTuser and ctHISTnode are on, target is a single null-terminated composite string beginning with a case insensitive user ID, a vertical bar (‘|’), and a case sensitive node name. To match all users, turn on ctHISTuser and set target to an empty string (“”), not a null pointer. If no user ID exists, TransactionHistory() appends a null byte to the data or index information.

The Transaction History feature is enabled by default, but may be disabled by adding #define NO_HISTORY to ctoptn.h/ctree.mak.

TransactionHistory Output

As outlined above, the output buffer contains the following information after a successful first search call or subsequent search call:

- A 40-byte history header.

- An optional record header for variable-length data records, superfile member data records, and resources in fixed or variable-length data files.

- The key value or data record entry.

- A null terminated string with the user ID and node name of the process which made the log entry, when requested using ctHISTinfo.

- A null terminated file name, when filno equals 1.

The TransactionHistory() header is an instance of the 40-byte HSTRSP structure, defined in ctport.h, composed of the following fields:

LONG tranno; /* transaction number */

LONG recbyt; /* log entry data record byte offset */

LONG lognum; /* the log number for the entry */

LONG logpos; /* the offset within the log for the entry */

LONG imglen; /* the length of the data or key value returned */

LONG trntim; /* the time stamp for the transaction */

LONG trnfil; /* the internal file number for the log entry */

LONG resrvd; /* reserved for future use */

UCOUNT membno; /* the index member number */

UCOUNT imgmap; /* details concerning the data returned */

COUNT trntyp; /* the type of transaction log entry */

COUNT trnusr; /* internal user number which made the entry */Each data and index entry in a transaction log belongs to a particular transaction. Each such transaction is assigned a unique number. This transaction number is returned in tranno. Each data and index entry is for a particular file at a particular data record location. recbyt contains this data record location, expressed as a byte offset from the beginning of the file. trnfil contains the unique file number assigned to each data and index file upon their creation or actual opening. Each time a file is opened, it is assigned a new file number. trntim contains the commit time of the transaction. In the event that this commit time cannot be determined, it is set to zero.

It is very important to note that more than one actual log entry may be combined in order to return an image of a data record. Variable-length data records and resources are composed of a header and the actual data. These components are written to the log separately, and must be combined to generate a coherent data entry. Further, a scan of the log based on an index file returning the corresponding data information may require more than one log entry to generate the returned data entry. Therefore, the lognum, logpos, and trntyp fields in the history header reflect only one of possibly several log entries combined to create the return information.

The imglen field contains the length of the key value or data record image returned in the output buffer just following the 40-byte history header. imglen includes the length of an optional record header before the data or resource image, if it is present. imglen does not include the length of the user ID and/or file name appended to the end of the key value or data record image. These null terminated ASCII string fields follow immediately after the key or record image.